Projects

The Discovery Project

Copyright Kip Loades

The Discovery project will enable community groups in Cambridge to enjoy and learn more about the monuments, wildlife and local history of Mill Road Cemetery. We will design a novel collaborative experience that allows school children and wildlife and local history groups to discover and make connections between what they observe in the physical space and what they can find in online libraries, websites and books.

People working in pairs will be provided with a mobile phone to access the Discovery website and will be instructed to explore part of the cemetery, looking for specific things related to a theme (e.g. people who died under the age of 50, early signs of spring, famous people). On finding something of interest, they will take a photo, label it and add comments, which can then be shared with others from their group who are located indoors. They will also be able to communicate with the indoor group, who have access to a state-of-the-art multi-touch tabletop, as well as tablet and laptop computers, which they can use to access other online resources.

A map of the cemetery will be presented on the tabletop showing the location and details of the headstones. It will also track and display where the groups are in the cemetery at a given time. The groups outdoors can also access this information on their mobile phones, as well as the related photos and their notes. Together, the ‘out there’ and ‘in here’ groups will create a rich, multi-layered and interconnected history of the site for a particular theme.

Yvonne Rogers, Anne Adams, Tim Coughlan, Trevor Collins, Janet Van der Linden, Pablo Haya and Estafania Martin

Animal Interaction

Although we have involved animals in machine interactions for a long time, the study of those interactions and their effects on animal users has yet to enter mainstream user-computer interaction research. Animal-Computer Interaction aims to make up for this lack of animal perspective while expanding the boundaries of user-interaction research and interaction design, in order to enhance our inter-species relationships with the animals we live or work with, advance our understanding of animal behaviour and cognition, render conservation efforts more effective, improve the ethical as well as economic sustainability of food production and, in the process, benefit different groups of human users too.

Clara Mancini and Janet van der Linden.

The Question

The Question is an immersive theatre project that explores haptic technology in relation to navigation, perception and knowledge. This innovative collaboration between engineers, artists and academics invites participants to explore a tactile and audio environment in the pitch dark. Blind and sighted audience members will be guided by a haptic device, which uses robotics technology and infrared sensors to change its form in response to their journey through the installation.

The Question focuses on the senses of touch and hearing, and locates the drama of the narrative in the body of each participant. The story is a fragmented recounting of an abstract blind character, struggling with scientific, philosophical and cultural questions of sensory translation, knowledge and the ultimate impact of these on individual identity.

Janet van der Linden and Yvonne Rogers are working with Extant (the UK's only professional performing arts company of visually impaired artists), and Battersea Arts Centre (a space for cutting-edge theatre). This project is funded by the Technology Strategy Board, Creative Industries Fast Track.

Change project (2010-2011)

The overarching research theme of this project is to explore the feasibility of using ubiquitous technologies to engender change in people's everyday habits. We will promote 'proactive' and 'provocative' interactions with the world by investigating whether and how a diversity of augmentation devices can encourage, enable or compel people to change their behaviours in response to a particular desired human value, with a focus on environmental change. The project will design and build prototypes and evaluate them in the wild, using novel affordable technologies, including wearables with micro-projectors, ambient displays and sensor-based devices. Unconventional and interdisciplinary methods will be used to create and test novel prototypes that can persuade or coerce people to change their individual and collective everyday habits, be it food shopping, selecting one's wardrobe or engaging in leisure activities. The research is intended to be 'edgy' and 'informative', raising contentious ethical and political issues with the general public, including issues of control, privacy and trust.

This project is a collaboration with Nottingham University (Tom Rodden), Sussex University (Dan Chalmers) and Goldsmiths (Bill Gaver) and is funded by the EPSRC. The team at the OU is led by Yvonne Rogers.

"OTIH: Out there and in here" project (2009-2011)

The goal of this project is to develop technologies that support people working together in a manner suited to their locations. One of the benefits of mobile technologies is that they combine 'the digital' (e.g., data, information, photos) with user experiences in novel ways that are contextualized by people's current physical activities. However, people with mobility disabilities or socio-economic limitations are often excluded from engaging in such peripatetic user experiences. Watching the world go by on a TV or computer screen may be engaging, but it doesn't support a student's interaction with the physical world. This project bridges this gap by developing hybrid 'social inclusion' systems supporting co-active participation between mixed teams of physically able and disabled users, enabling experiences of both being in the field and at a stationary base. To this end, mobile and tabletop technologies will be linked to support synchronous distributed team collaboration. A prototype system will be developed and evaluated in situ to demonstrate the benefits of interlinking technology with socially interdependent experiences.

The team at the OU is led by Anne Adams (IET) and comprises Yvonne Rogers, Trevor Collins, Sarah-Jane Davies and Stephen Swithenby, who will be working with Abigail Sellen (Microsoft) and Dan Phillips (OOKL Software). This project is funded by RCUK / EPSRC under the Digital Economies "In the Wild" initiative.

ShareIT Project

The interdisciplinary ShareIT project, funded by the UK's EPSRC (2007-2010), is investigating how a new generation of shareable technologies, designed specifically for more than one person to use at a time, can enable groups to collaborate more effectively, be it learning maths, planning seating allocation, conducting financial forecasting or socialising. The technologies include gesture-based wall displays, multi-touch tabletops and interactive tangibles.

Persuasive Ambient Displays

This project is investigating how physical installations can be an effective form of persuasive technology. Our focus is on how novel ambient displays embedded in open-plan buildings can trigger and promote behavioural change, drawing on theories of fast and frugal heuristics and reflection. We have developed an integrated approach comprising (i) an abstract display that lures and nudges people towards a desired behaviour at the point of individual decision-making and (ii) an ambient public display that enables people to reflect upon their own and others' aggregate behaviour. The 'clouds and lights' project follows this approach and is aimed at people in a workplace who have the choice of taking the stairs or the elevator.

A video on this work will soon appear on the OU iTunes site.

e-sense

This project, funded as part of the AHRC's speculative research programme in collaboration with Andy Clark at the University of Edinburgh, is concerned with the idea of the extended mind and explores how we can extend our senses by designing novel technologies. It started in September 2008 and will run until the end of March 2010.

MusicJacket

We are developing a system for novice violin players that helps them learn to hold their instrument correctly and develop a good bowing action. We work closely with violin teachers, and our system is designed to support conventional teaching methods.

We use an Animazoo IGS-190 inertial motion capture system to track the position of the violin and the trajectory of the bow. We use vibrotactile feedback to inform students when they are holding their violin incorrectly or when their bowing trajectory has deviated from the desired path. We are investigating whether this system motivates students to practise and improves their technique.

PRIMMA

Location-based services, mobile devices, and tracking and monitoring devices are being developed that have a range of potential applications, from supporting mobile learning to remote health monitoring of the elderly and chronically ill. However, do users actually understand how much of their personal information is being shared with others? In general, there will be a trade-off between the usefulness of disclosing private information and the risk of it being misused. This project is concerned with developing techniques for protecting the private information typically generated by ubiquitous computing applications from malicious or accidental misuse. Privacy requirements leading to a Privacy Rights Management (PRM) framework are being developed for a specific set of ubiquitous computing technologies.

The project is a collaboration between researchers at the OU and Morris Sloman's group at Imperial. The team at the OU is led by Professor Bashar Nuseibeh.

Ubicomp Grand Challenge

The shift to Ubiquitous Computing from Information Technology is a challenge that affects all aspects of computer science and has massive implications for how we might reason about, build and experience computer systems in the future.

The scale of the problems to be addressed requires us to tackle this research at a global scale and to shape a multidisciplinary international community around the grand challenge of ubiquitous computing. This project, funded by the EPSRC (October 2007 - March 2008), is putting into place the multidisciplinary and international collaborations between world-leading researchers necessary to launch a coordinated international response to the challenge of Ubiquitous Computing. In doing so, we aim to lay the foundation required to understand, design and realize future large-scale Ubiquitous Computing arrangements that will be embedded in the world we inhabit and shape the ways in which we all live.

Project partners are based at Bath, Cambridge, Glasgow, Imperial, Nottingham, Oxford, Southampton and Sussex.

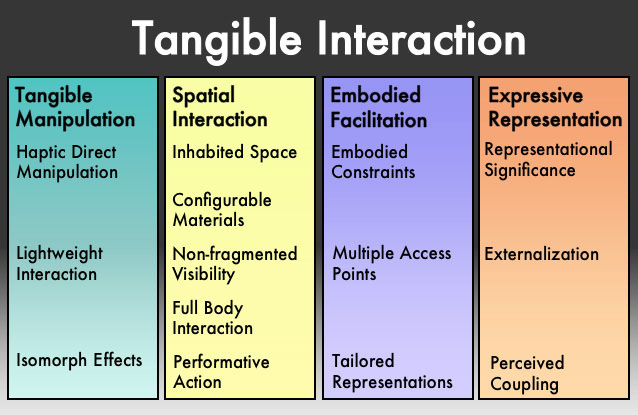

Tangible Interaction

The project extended and explored an existing tangible interaction framework. Research was based mainly at the OU, augmented by a range of short-term research collaborations with external partners. Collaboration at the OU was primarily with the ShareIT project.